Cloud Trace: Node.js Client

Node.js Support for StackDriver Trace

A comprehensive list of changes in each version may be found in the CHANGELOG.

- Cloud Trace Node.js Client API Reference

- Cloud Trace Documentation

- github.com/googleapis/cloud-trace-nodejs

Read more about the client libraries for Cloud APIs, including the older Google APIs Client Libraries, in Client Libraries Explained.

Table of contents:

Quickstart

Before you begin

- Select or create a Cloud Platform project.

- Enable the Cloud Trace API.

- Set up authentication with a service account so you can access the API from your local workstation.

Installing the client library

npm install @google-cloud/trace-agentWarning

cloud-trace-nodejsis in maintenance mode. This means that we'll continue to fix bugs add add security patches. We'll consider merging new feature contributions (depending on the anticipated maintenance cost). But we won't develop new features ourselves.In particular, we will not add support for new major versions of libraries.

We encourage users to migrate to OpenTelemetry JS Instrumentation instead.

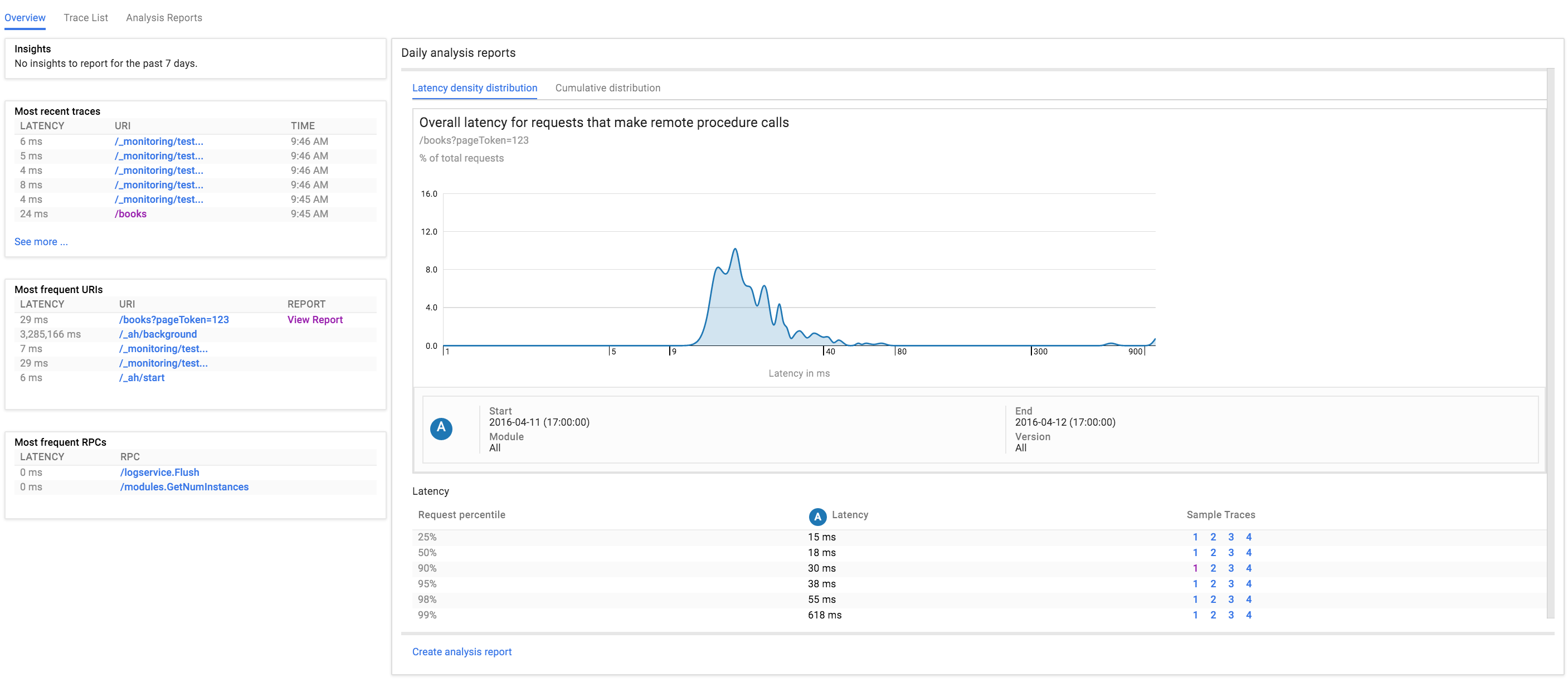

This module provides automatic tracing for Node.js applications with Cloud Trace. Cloud Trace is a feature of Google Cloud Platform that collects latency data (traces) from your applications and displays it in near real-time in the Google Cloud Console.

Usage

The Trace Agent supports Node 8+.

Note: Using the Trace Agent requires a Google Cloud Project with the Cloud Trace API enabled and associated credentials. These values are auto-detected if the application is running on Google Cloud Platform. If your application is not running on GCP, you will need to specify the project ID and credentials either through the configuration object, or with environment variables. See Setting Up Cloud Trace for Node.js for more details.

Note: The Trace Agent does not currently work out-of-the-box with Google Cloud Functions (or Firebase Cloud Functions). See #725 for a tracking issue and details on how to work around this.

Simply require and start the Trace Agent as the first module in your application:

require('@google-cloud/trace-agent').start();

// ...If you want to use import, you will need to do the following to import all required types:

import * as TraceAgent from '@google-cloud/trace-agent';

Optionally, you can pass a configuration object to the start() function as follows:

require('@google-cloud/trace-agent').start({

samplingRate: 5, // sample 5 traces per second, or at most 1 every 200 milliseconds.

ignoreUrls: [ /^\/ignore-me/ ] // ignore the "/ignore-me" endpoint.

ignoreMethods: [ 'options' ] // ignore requests with OPTIONS method (case-insensitive).

});

// ...The object returned by start() may be used to create custom trace spans:

const tracer = require('@google-cloud/trace-agent').start();

// ...

app.get('/', async () => {

const customSpan = tracer.createChildSpan({name: 'my-custom-span'});

await doSomething();

customSpan.endSpan();

// ...

});What gets traced

The trace agent can do automatic tracing of the following web frameworks:

- express (version 4)

- gRPC server (version ^1.1)

- hapi (versions 8 - 19)

- koa (version 1 - 2)

- restify (versions 3 - 11)

The agent will also automatically trace RPCs from the following modules:

- Outbound HTTP requests through

http,https, andhttp2core modules - grpc client (version ^1.1)

- mongodb-core (version 1 - 3)

- mongoose (version 4 - 5)

- mysql (version ^2.9)

- mysql2 (version 1)

- pg (versions 6 - 7)

- redis (versions 0.12 - 2)

You can use the Custom Tracing API to trace other modules in your application.

To request automatic tracing support for a module not on this list, please file an issue. Alternatively, you can write a plugin yourself.

Tracing Additional Modules

To load an additional plugin, specify it in the agent's configuration:

require('@google-cloud/trace-agent').start({

plugins: {

// You may use a package name or absolute path to the file.

'my-module': '@google-cloud/trace-agent-plugin-my-module',

'another-module': path.join(__dirname, 'path/to/my-custom-plugins/plugin-another-module.js')

}

});This list of plugins will be merged with the list of built-in plugins, which will be loaded by the plugin loader. Each plugin is only loaded when the module that it patches is loaded; in other words, there is no computational overhead for listing plugins for unused modules.

Custom Tracing API

The custom tracing API can be used to create custom trace spans. A span is a particular unit of work within a trace, such as an RPC request. Spans may be nested; the outermost span is called a root span, even if there are no nested child spans. Root spans typically correspond to incoming requests, while child spans typically correspond to outgoing requests, or other work that is triggered in response to incoming requests. This means that root spans shouldn't be created in a context where a root span already exists; a child span is more suitable here. Instead, root spans should be created to track work that happens outside of the request lifecycle entirely, such as periodically scheduled work. To illustrate:

const tracer = require('@google-cloud/trace-agent').start();

// ...

app.get('/', (req, res) => {

// We are in an automatically created root span corresponding to a request's

// lifecycle. Here, we can manually create and use a child span to track the

// time it takes to open a file.

const readFileSpan = tracer.createChildSpan({ name: 'fs.readFile' });

fs.readFile('/some/file', 'utf8', (err, data) => {

readFileSpan.endSpan();

res.send(data);

});

});

// For any significant work done _outside_ of the request lifecycle, use

// runInRootSpan.

tracer.runInRootSpan({ name: 'init' }, rootSpan => {

// ...

// Be sure to call rootSpan.endSpan().

});For any of the web frameworks for which we provide built-in plugins, a root span is automatically started whenever an incoming request is received (in other words, all middleware already runs within a root span). If you wish to record a span outside of any of these frameworks, any traced code must run within a root span that you create yourself.

Accessing the API

Calling the start function returns an instance of Tracer, which provides an interface for tracing:

const tracer = require('@google-cloud/trace-agent').start();It can also be retrieved by subsequent calls to get elsewhere:

// after start() is called

const tracer = require('@google-cloud/trace-agent').get();A Tracer object is guaranteed to be returned by both of these calls, even if the agent is disabled.

A fully detailed overview of the Tracer object is available here.

How does automatic tracing work?

The Trace Agent automatically patches well-known modules to insert calls to functions that start, label, and end spans to measure latency of RPCs (such as mysql, redis, etc.) and incoming requests (such as express, hapi, etc.). As each RPC is typically performed on behalf of an incoming request, we must make sure that this association is accurately reflected in span data. To provide a uniform, generalized way of keeping track of which RPC belongs to which incoming request, we rely on async_hooks to keep track of the "trace context" across asynchronous boundaries.

async_hooks works well in most cases. However, it does have some limitations that can prevent us from being able to properly propagate trace context:

- It is possible that a module does its own queuing of callback functions – effectively merging asynchronous execution contexts. For example, one may write an http request buffering library that queues requests and then performs them in a batch in one shot. In such a case, when all the callbacks fire, they will execute in the context which flushed the queue instead of the context which added the callbacks to the queue. This problem is called the pooling problem or the user-space queuing problem, and is a fundamental limitation of JavaScript. If your application uses such code, you will notice that RPCs from many requests are showing up under a single trace, or that certain portions of your outbound RPCs do not get traced. In such cases we try to work around the problem through monkey patching, or by working with the library authors to fix the code to properly propagate context. However, finding problematic code is not always trivial.

- The

async_hooksAPI has issues tracking context aroundawait-ed "thenables" (rather than real promises). Requests originating from the body of athenimplementation in such a user-space "thenable" may not get traced. This is largely an unconventional case but is present in theknexmodule, which monkeypatches the Bluebird Promise's prototype to make database calls. If you are usingknex(esp. therawfunction), see #946 for more details on whether you are affected, as well as a suggested workaround.

Tracing bundled or webpacked server code.

unsupported

The Trace Agent does not support bundled server code, so bundlers like webpack or @zeit/ncc will not work.

Samples

Samples are in the samples/ directory. Each sample's README.md has instructions for running its sample.

| Sample | Source Code | Try it |

|---|---|---|

| App | source code |  |

| Snippets | source code |  |

The Cloud Trace Node.js Client API Reference documentation also contains samples.

Supported Node.js Versions

Our client libraries follow the Node.js release schedule. Libraries are compatible with all current active and maintenance versions of Node.js. If you are using an end-of-life version of Node.js, we recommend that you update as soon as possible to an actively supported LTS version.

Google's client libraries support legacy versions of Node.js runtimes on a best-efforts basis with the following warnings:

- Legacy versions are not tested in continuous integration.

- Some security patches and features cannot be backported.

- Dependencies cannot be kept up-to-date.

Client libraries targeting some end-of-life versions of Node.js are available, and

can be installed through npm dist-tags.

The dist-tags follow the naming convention legacy-(version).

For example, npm install @google-cloud/trace-agent@legacy-8 installs client libraries

for versions compatible with Node.js 8.

Versioning

This library follows Semantic Versioning.

This library is considered to be in preview. This means it is still a work-in-progress and under active development. Any release is subject to backwards-incompatible changes at any time.

More Information: Google Cloud Platform Launch Stages

Contributing

Contributions welcome! See the Contributing Guide.

Please note that this README.md, the samples/README.md,

and a variety of configuration files in this repository (including .nycrc and tsconfig.json)

are generated from a central template. To edit one of these files, make an edit

to its templates in

directory.

License

Apache Version 2.0

See LICENSE