Run Keras models in the browser, with GPU support using WebGL

Run Keras models in the browser, with GPU support provided by WebGL 2. Models can also be run in Node.js, but only in CPU mode. Because Keras supports several frameworks as interchangeable backends, models can be trained with any of them, including TensorFlow and CNTK.
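Since GPU mode depends on WebGL 2 and Node.js has no WebGL, an application may want to check which mode is available before loading a model. The sketch below shows one way to do that. `pickBackend` and the `DocumentLike`/`CanvasLike` types are hypothetical helpers, not part of Keras.js. The sketch assumes that being able to create a `webgl2` canvas context is enough to use GPU mode.

```typescript
// Minimal structural types so the sketch compiles without the DOM lib.
interface CanvasLike {
  getContext(contextId: string): unknown;
}
interface DocumentLike {
  createElement(tagName: string): CanvasLike;
}

type Backend = "gpu" | "cpu";

// Hypothetical helper (not a Keras.js API): returns "gpu" only when a
// WebGL 2 context can be created, otherwise "cpu".
function pickBackend(doc?: DocumentLike): Backend {
  // In Node.js there is no global `document`, so this falls back to CPU mode.
  const d =
    doc ?? (globalThis as unknown as { document?: DocumentLike }).document;
  if (!d) return "cpu";
  try {
    const canvas = d.createElement("canvas");
    // getContext returns null when WebGL 2 is unsupported or disabled.
    return canvas.getContext("webgl2") ? "gpu" : "cpu";
  } catch {
    // Some environments throw instead of returning null; treat that as no GPU.
    return "cpu";
  }
}
```

Passing `doc` explicitly keeps the check testable outside a browser. In a real page it can be omitted so that the global `document` is used.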

Library version compatibility: Keras 2.1.2

Interactive Demos

Check out the `demos/` directory for real examples of Keras.js running in Vue.js.

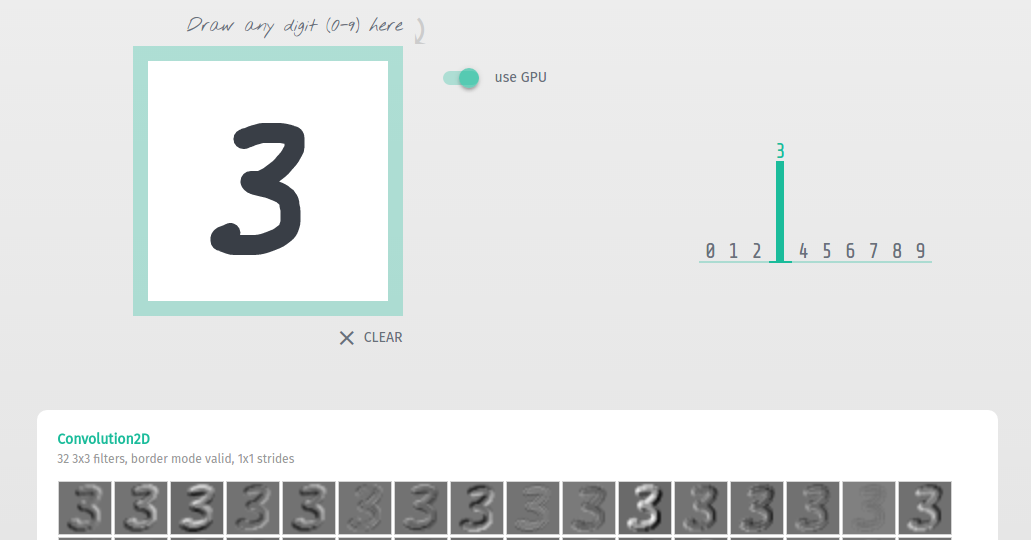

- Basic Convnet for MNIST

- Convolutional Variational Autoencoder, trained on MNIST

- Auxiliary Classifier Generative Adversarial Networks (AC-GAN) on MNIST

- 50-layer Residual Network, trained on ImageNet

- Inception v3, trained on ImageNet

- DenseNet-121, trained on ImageNet

- SqueezeNet v1.1, trained on ImageNet

- Bidirectional LSTM for IMDB sentiment classification